Abstract

The goal of this work is to combine several machine learning methods in a study of rotating machines with fluid-film bearings. A fitting method is applied to approximate the non-linear reaction force in a bearing and to solve a rotor dynamics problem. The resulting simulation model of a rotor machine is used as part of a control system based on reinforcement learning and the policy gradient method. The experimental part of the paper deals with a pattern recognition and fault diagnosis problem. In the reported tests the simulation model ran twice as fast as real time, the accuracy of condition recognition reached 80-90 %, and the control agent reduced the power loss by up to 17 %.

Highlights

- Fault diagnosis in fluid-film bearings.

- Reinforcement learning for rotating machine control.

- Rotor dynamics simulation.

1. Introduction

The main tool in modern machine learning is the artificial neural network (ANN) [1]. This work deals with applications of machine learning to rotating machines with fluid-film bearings. Rotor rotation is usually accompanied by lateral vibrations [2, 3]. The rotor trajectory contains information about the condition of the bearings and of the rotor machine as a whole. Rotor dynamics modeling is a difficult task, especially when the rotor has fluid-film bearings [2]. Hydrodynamic calculations are computationally expensive; therefore, this part of the rotor dynamics problem can be approximated using ANNs [4]. Modern rotating machines can be equipped with a number of sensors, and analysis of their measurements can be automated using specialized ANNs that implement logistic regression [1]. Both shallow learning and deep learning are used in pattern recognition. Deep convolutional neural networks are widely used in fault diagnosis [5, 6]. Deep learning is also emerging in reinforcement learning and continuous control systems [7, 8].

This paper brings together theoretical and experimental results obtained by the authors in applying machine learning to the simulation, diagnosis and control of rotating machines with fluid-film bearings.

2. Shallow learning for rotor dynamics simulation and fault diagnosis

The goal of supervised learning is to determine the relationship between two sets: an input set $X$ and a target set $Y$. The difference between predictions and targets is minimized during training. The function that measures this difference is called a target function, a cost function, or a loss function.

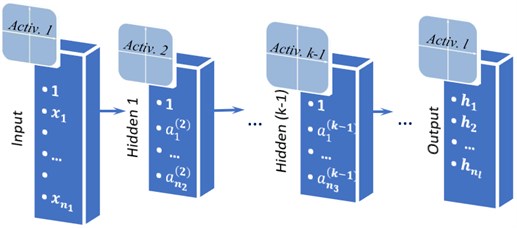

The scheme of a simple feed-forward ANN called a multilayer perceptron is shown in Fig. 1. The $l$-layer ANN has an input layer, $l-2$ hidden layers and an output layer. A feature of the network architecture is that each neuron of the previous layer transmits its signal to each neuron of the next layer [4].

Input data in the form of numbers can be represented as a one-dimensional matrix. The input layer receives this matrix ($X$) and simply transmits it to the second layer with an additional unit element, so the matrix $A^{(1)} = [1, X]$ is the output of the first layer. In the second (hidden) layer the data from the first layer are multiplied by the weights matrix and summed: $Z^{(2)} = A^{(1)} \Theta^{(1)}$. An activation function is applied to the result, $g(Z^{(2)})$, and the resulting matrix with an additional unit element is the output of the second layer, $A^{(2)} = [1, g(Z^{(2)})]$. The same operations take place in an arbitrary hidden layer $k$:

$$Z^{(k)} = A^{(k-1)} \Theta^{(k-1)}, \quad A^{(k)} = \left[1, \; g\left(Z^{(k)}\right)\right], \tag{1}$$

where $Z^{(k)}$ is the matrix with the results of multiplication by weights and summation in the current layer, $A^{(k-1)}$, $A^{(k)}$ are the matrices of outputs of the previous and the current layers respectively, $\Theta^{(k-1)}$ is the weights matrix of the previous layer, and $g$ is an activation function.

Similar calculations occur in the output layer. The result of the calculation in the output layer is the matrix of predictions $\hat{Y}$.

The unknown weights matrices are determined by minimizing the objective function $J$. The type of objective function depends on the type of problem.

Fig. 1. An $l$-layer feed-forward neural network with $n_1$ inputs and $n_l$ outputs
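The layer-by-layer calculation described above can be sketched in a few lines of plain Python. This is only an illustration: the function names, the logistic form of the activation and the random initialization are assumptions, not details taken from the paper.

```python
import math
import random


def sigmoid(z):
    """Logistic activation, used here as one possible choice of g."""
    return 1.0 / (1.0 + math.exp(-z))


def matmul(a, b):
    """Multiply an (r x n) matrix by an (n x c) matrix stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def forward(x_rows, thetas):
    """Propagate a batch of samples through the network.

    x_rows: list of samples, one row per sample.
    thetas: list of weight matrices; thetas[k] has shape (n_k + 1, n_{k+1}),
            where the first row holds the weights of the unit element.
    The last layer is kept linear, as in a mapping (regression) network.
    """
    a = [[1.0] + list(row) for row in x_rows]            # A = [1, X]
    for k, theta in enumerate(thetas):
        z = matmul(a, theta)                              # Z = A * Theta
        if k == len(thetas) - 1:
            return z                                      # linear output layer
        a = [[1.0] + [sigmoid(v) for v in row] for row in z]


def random_thetas(layer_sizes, seed=0):
    """Hypothetical helper: small random weights for the given layer sizes."""
    rng = random.Random(seed)
    return [[[rng.uniform(-0.5, 0.5) for _ in range(n_out)]
             for _ in range(n_in + 1)]
            for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])]
```

For example, `forward([[0.1, 0.2, 0.3, 0.4]], random_thetas([4, 8, 2]))` returns one row with two output values for a network with four inputs, eight hidden neurons and two outputs.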

2.1. Multi-dimensional mapping for rotor dynamics simulation

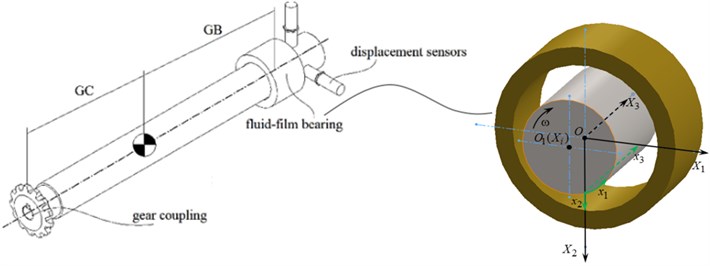

A rigid unbalanced rotor with a gear coupling and a fluid-film bearing at opposite ends is considered (see Fig. 2). The equations of the rotor's motion can be written as follows [3]:

where is rotor mass, , are coordinates of the center of mass and angles of the rotor rotation respectively, is time, , are reaction forces of the gear coupling and the bearing respectively, is inertia force, is the rotor unbalance, is phase of unbalance, , are polar and diametral moments of inertia respectively, , are radius-vectors to the coupling and to the bearing respectively.

It is assumed that reaction forces are equivalent to springs and dampers: , . Also, it is assumed that reaction in a coupling is linear with given linear coefficients and reaction in a bearing is non-linear. Calculation of the bearing reaction is connected with solution of the Reynolds equation [2, 4]:

where are coordinates connected with the thin oil film, is the unknown pressure function, is the oil film thickness, is viscosity, are the tangential and normal components of the journal surface velocity, where , .

Fig. 2. Calculation schematic of a rotor-bearing test rig with a fluid-film bearing

Given the pressure function, the components of reaction can be calculated by integration:

where , are the bearing length and diameter respectively, .
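The integration of a pressure field into reaction components can be sketched numerically as follows. The angular convention, the sign of the force and the midpoint quadrature are assumptions made for this illustration; the function and argument names are hypothetical.

```python
import math


def bearing_reaction(pressure, length, diameter, n_theta=180, n_z=20):
    """Integrate a pressure field p(theta, z) over the journal surface.

    Assumed convention (not taken from the paper): theta is the circumferential
    angle measured from the x axis, z is the axial coordinate, and the film
    pressure pushes the journal away from the film, hence the minus signs.
    Returns (R_x, R_y) in newtons if p is in Pa and lengths are in m.
    """
    radius = diameter / 2.0
    d_theta = 2.0 * math.pi / n_theta
    d_z = length / n_z
    r_x = r_y = 0.0
    for i in range(n_theta):
        theta = (i + 0.5) * d_theta              # midpoint rule in theta
        for j in range(n_z):
            z = (j + 0.5) * d_z                  # midpoint rule in z
            area = radius * d_theta * d_z        # surface element
            p = pressure(theta, z)
            r_x -= p * math.cos(theta) * area
            r_y -= p * math.sin(theta) * area
    return r_x, r_y
```

A uniform pressure gives a zero net force, while a field such as `lambda theta, z: 1e5 * math.cos(theta)` gives a force directed along the negative x axis. In a real solver the pressure values would come from the numerical solution of the Reynolds equation rather than from a closed-form function.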

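Approximation of Eq. (4) with a function of four arguments can be implemented by the ANN represented in Fig. 1. The input layer receives a matrix $X$ with the components of the rotor position and of the velocity of its lateral vibrations. The output of the ANN, $\hat{Y}$, is the fluid-film reaction force. A 3-layer feed-forward ANN with a sigmoid activation function $g$ in the hidden layer and a linear function in the output layer is used to solve the multi-dimensional mapping problem. Forward propagation in the ANN includes the following calculations:

$$A^{(1)} = [1, X], \quad Z^{(2)} = A^{(1)} \Theta^{(1)}, \quad A^{(2)} = \left[1, \; g\left(Z^{(2)}\right)\right], \quad \hat{Y} = A^{(2)} \Theta^{(2)}, \tag{5}$$

where $\Theta^{(1)}$, $\Theta^{(2)}$ are the weights matrices of the input and the hidden layers, and $[1, \cdot]$ denotes a matrix extended with a unit element.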

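The number of hidden neurons is arbitrary. Network training takes place on a large number of samples, i.e. pairs of input and output matrices. In mapping problems, the cost function has the following form [1]:

$$J(\Theta) = \frac{1}{2N} \sum_{i=1}^{N} \sum_{k=1}^{n_l} \left(\hat{y}_k^{(i)} - y_k^{(i)}\right)^2 + \frac{\lambda}{2N} \sum_{j=1}^{l-1} \sum_{p=1}^{n_j} \sum_{q=1}^{n_{j+1}} \left(\Theta_{pq}^{(j)}\right)^2, \tag{6}$$

where $N$ is the number of samples in a dataset, $\hat{y}_k^{(i)}$, $y_k^{(i)}$ are the predicted and target values of the $k$-th output calculated for the $i$-th sample, $n_j$, $n_l$ are the numbers of neurons in the $j$-th layer and in the output layer respectively, and $\lambda$ is a regularization parameter.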
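A regularized squared-error cost of this kind can be evaluated with the short sketch below. The scaling by twice the number of samples and the choice not to penalize the weights of the unit element are assumptions of this illustration, not details confirmed by the paper.

```python
def mapping_cost(predictions, targets, thetas, lam):
    """Regularized squared-error cost for a mapping network.

    predictions, targets: lists of rows, one row per sample.
    thetas: list of weight matrices; row 0 of each matrix is assumed to hold
            the weights of the unit (bias) element and is not penalized.
    lam: regularization parameter.
    """
    n_samples = len(targets)
    data_term = sum((p - t) ** 2
                    for p_row, t_row in zip(predictions, targets)
                    for p, t in zip(p_row, t_row))
    reg_term = sum(w ** 2
                   for theta in thetas
                   for row in theta[1:]          # skip the bias row
                   for w in row)
    return (data_term + lam * reg_term) / (2.0 * n_samples)
```

Setting `lam` to zero recovers the plain mean squared error (up to the factor of one half), while larger values penalize large weights and reduce overfitting.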

In the training process the values of the weights and the regularization parameter are calculated. The network is trained with the Levenberg-Marquardt backpropagation algorithm. The training process is implemented in one of the specialized programming environments [9, 10].

2.2. Classification and pattern recognition tools for rotating machine diagnosis

The main idea is the same: to determine the relationship between inputs $X$ and targets $Y$. The main difference is that the target values are discrete and equal to 0 or 1, while the predictions, approximated by a logistic function, take continuous values in the interval (0, 1) [1].

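Sensor measurements are recorded during tests under various conditions of a rotating machine, and these conditions are to be recognized by ANNs. The data from different types of sensors can be normalized [1] and merged into an input matrix $X$. The number of classes in a target matrix $Y$ is equal to the number of observed conditions of the rotating machine. A 3-layer feed-forward ANN with a sigmoid activation function $g$ in the hidden layer (see Subsection 2.1) and a softmax function $g_s$ in the output layer, $g_s(z)_k = e^{z_k} / \sum_j e^{z_j}$, is used to solve the pattern recognition problem. Forward propagation includes the following calculations:

$$A^{(1)} = [1, X], \quad Z^{(2)} = A^{(1)} \Theta^{(1)}, \quad A^{(2)} = \left[1, \; g\left(Z^{(2)}\right)\right], \quad Z^{(3)} = A^{(2)} \Theta^{(2)}, \quad \hat{Y} = g_s\left(Z^{(3)}\right), \tag{7}$$

where $\Theta^{(1)}$, $\Theta^{(2)}$ are the weights matrices, $[1, \cdot]$ denotes a matrix extended with a unit element, and $\hat{Y}$ is the matrix of predictions.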

As for the previous ANN architecture (see Subsection 2.1), the number of hidden neurons is arbitrary and the training process requires a number of training samples. In pattern recognition problems, the cost function has the following form [1]:

The network is trained with the scaled conjugate gradient method using functions of specialized programming environments [9, 10].
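The output stage of such a classifier can be illustrated with a minimal sketch. It assumes one-hot targets and an unregularized mean cross-entropy, which is a common pairing with a softmax output but is not confirmed by the paper as the exact cost used; all function names are hypothetical.

```python
import math


def softmax(z_row):
    """Numerically stable softmax of one row of output-layer sums."""
    z_max = max(z_row)
    exps = [math.exp(z - z_max) for z in z_row]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(prob_rows, target_rows, eps=1e-12):
    """Mean cross-entropy between predicted probabilities and one-hot targets."""
    loss = 0.0
    for probs, targets in zip(prob_rows, target_rows):
        loss -= sum(t * math.log(p + eps) for p, t in zip(probs, targets))
    return loss / len(target_rows)


def predict_class(probs):
    """Index of the most probable machine condition."""
    return max(range(len(probs)), key=probs.__getitem__)
```

The softmax outputs sum to one, so each row can be read as a probability distribution over the observed conditions, and `predict_class` picks the condition reported by the network.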

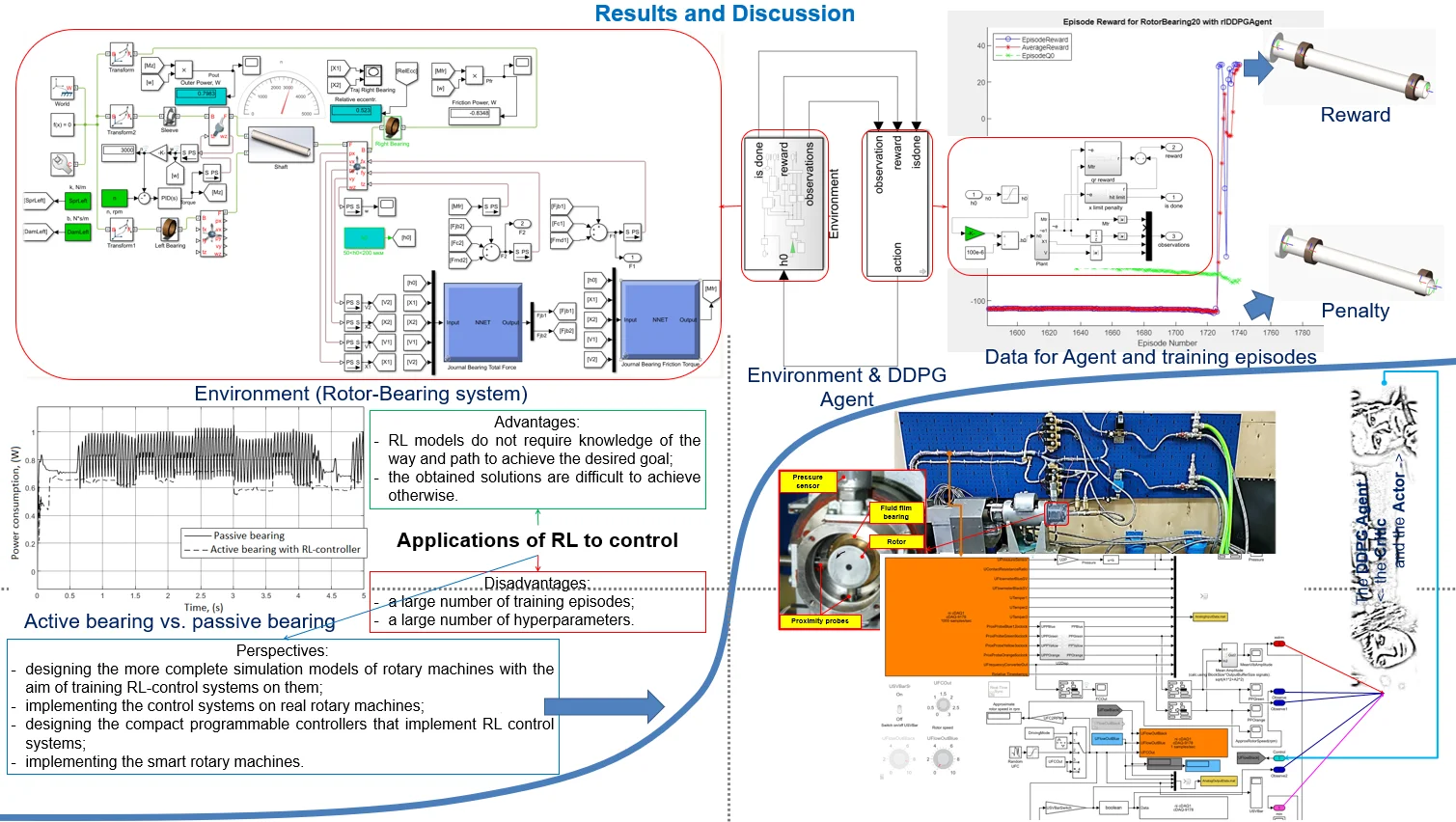

3. Deep reinforcement learning for rotating machine control

The main objective of the control system under study is minimization of energy consumption. The fluid-film reaction and the friction torque in a bearing are non-linear functions of the pressure distribution (see Eq. (3)). It is assumed that the rotor vibrations are described by Eq. (2), the coupling reaction is linear, and the bearing reaction is simulated by the ANN described in Subsection 2.1 (see Fig. 2). In terms of reinforcement learning, the rotor-bearing simulation model is the environment and the control system is the agent. At each time step the agent receives feedback from the environment in the form of a matrix of simulation results and responds with an action in the form of the supply pressure or any other parameter of the fluid-film bearing. The main idea is to train the agent after the event, giving it a higher reward for better actions.
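The interaction between the agent and the environment can be sketched with a toy loop. The class `ToyBearingEnv`, its quadratic loss and the two policies are invented for this illustration and stand in for the rotor-bearing simulation model and the trained agent.

```python
import random


class ToyBearingEnv:
    """Hypothetical stand-in for the rotor-bearing simulation model.

    The state is a single number (a normalized power loss) and the action is a
    normalized bearing parameter in [0, 1]. The loss is smallest at action 0.6;
    this quadratic shape is invented for the illustration only.
    """

    def reset(self):
        self.loss = 1.0
        return [self.loss]

    def step(self, action):
        action = min(1.0, max(0.0, action))
        self.loss = 0.2 + (action - 0.6) ** 2
        reward = -self.loss                  # lower power loss, higher reward
        return [self.loss], reward


def run_episode(env, policy, n_steps=50):
    """Let the agent interact with the environment and collect experience."""
    state = env.reset()
    transitions, total_reward = [], 0.0
    for _ in range(n_steps):
        action = policy(state)
        next_state, reward = env.step(action)
        transitions.append((state, action, reward, next_state))
        total_reward += reward
        state = next_state
    return transitions, total_reward


rng = random.Random(0)
random_policy = lambda state: rng.random()   # explores without any knowledge
good_policy = lambda state: 0.6              # always picks the best parameter

_, random_return = run_episode(ToyBearingEnv(), random_policy)
transitions, good_return = run_episode(ToyBearingEnv(), good_policy)
```

The collected tuples of state, action, reward and next state are the experience from which a reinforcement learning agent is trained; the better policy accumulates the higher total reward.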

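The deep deterministic policy gradient (DDPG) algorithm is used. The algorithm of DDPG agents is presented in [8]. At each time step the value function is calculated as follows [8]:

$$y_i = r_i + \gamma \, Q'\left(s_{i+1}, \; \mu'\left(s_{i+1} \mid \theta^{\mu'}\right) \mid \theta^{Q'}\right), \tag{9}$$

where $r_i$ is the reward, $s_{i+1}$ is the next state, $\gamma$ is a discount factor, $Q'$ is a $Q$-function calculated by a target critic, $\mu'$ is a policy function of the action by a target actor, and $\theta^{\mu}$, $\theta^{Q}$ are the unknown parameters of the actor and the critic ANNs respectively (primes denote the target networks).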

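The architectures of the networks are presented in the next section of the paper. The unknown parameters are calculated by minimizing the loss function [8]:

$$L = \frac{1}{N} \sum_{i} \left(y_i - Q\left(s_i, a_i \mid \theta^{Q}\right)\right)^2, \tag{10}$$

where $N$ is the number of sampled transitions, and $s_i$, $a_i$ are the state and the action at step $i$.

The networks are trained with the stochastic gradient descent method using functions of specialized programming environments [9, 10].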
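The target value and the critic loss of the DDPG algorithm [8] can be written compactly as below. The actor and critic are passed in as plain Python callables, which is a simplification for illustration; in practice they are neural networks built in one of the environments [9, 10]. The soft update of the target parameters follows the rule given in [8].

```python
def td_targets(batch, target_actor, target_critic, gamma=0.99):
    """y_i = r_i + gamma * Q'(s_{i+1}, mu'(s_{i+1})) for every transition."""
    return [reward + gamma * target_critic(next_state, target_actor(next_state))
            for (_state, _action, reward, next_state) in batch]


def critic_loss(batch, targets, critic):
    """Mean squared error between the targets and the critic's Q-values."""
    errors = [(y - critic(state, action)) ** 2
              for (state, action, _reward, _next_state), y in zip(batch, targets)]
    return sum(errors) / len(errors)


def soft_update(target_params, params, tau=0.001):
    """Slowly move the target-network parameters towards the learned ones."""
    return [tau * p + (1.0 - tau) * tp for tp, p in zip(target_params, params)]
```

Each element of `batch` is a tuple of state, action, reward and next state. The critic parameters are adjusted to reduce `critic_loss`, while the target networks change slowly through `soft_update`, which stabilizes training.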

4. Simulation and experimental results

The first series of simulation tests was performed with a model of the test rig based on Eqs. (2)-(4). A set of rotor trajectories was calculated. Then the ANN described by Eqs. (5)-(6) was trained and tested in comparison with a known linear model characterized by the spring and damper matrices [4]. The results demonstrated that the rotor dynamics simulation program with the ANN module calculates the rotor trajectory twice as fast as real time. It was also demonstrated that the ANN allows simulation of non-linear transient processes with variable rotor speed and high vibrations [4].
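For comparison, a linear spring-and-damper rotor model of the kind mentioned above can be integrated in time with a few lines of code. The sketch below is a simplified point-mass rotor with scalar (isotropic) stiffness and damping and a rotating unbalance force; the scalar coefficients, the integration scheme and all parameter values are assumptions for illustration and do not reproduce the test rig model.

```python
import math


def simulate_linear_rotor(mass, stiffness, damping, unbalance, omega,
                          t_end=1.0, dt=1e-4):
    """Integrate m*q'' = -K*q - B*q' + F(t) for q = (x, y).

    F(t) = m * unbalance * omega**2 * (cos(omega*t), sin(omega*t)) is the
    rotating unbalance force. Isotropic scalar K and B are an assumption made
    to keep the sketch short; the paper speaks of spring and damper matrices.
    Returns the list of (x, y) points of the trajectory.
    """
    x = y = vx = vy = 0.0
    trajectory = []
    for n in range(int(round(t_end / dt))):
        t = n * dt
        fx = mass * unbalance * omega ** 2 * math.cos(omega * t)
        fy = mass * unbalance * omega ** 2 * math.sin(omega * t)
        ax = (fx - stiffness * x - damping * vx) / mass
        ay = (fy - stiffness * y - damping * vy) / mass
        vx += ax * dt                      # semi-implicit Euler: velocity first,
        vy += ay * dt
        x += vx * dt                       # then position with the new velocity
        y += vy * dt
        trajectory.append((x, y))
    return trajectory
```

After the transient decays, the trajectory of this linear model settles into a circular orbit whose radius follows the classical forced-vibration formula. A non-linear bearing model replaces the terms `stiffness * x` and `damping * vx` with a reaction force that depends on position and velocity in a more complex way.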

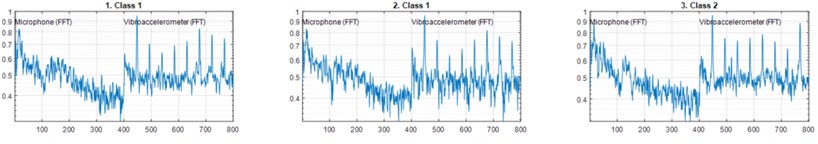

The second series of tests was performed using the test rig with a multi-sensor measurement system. Two displacement sensors measured the rotor vibrations, three accelerometers measured the vibration accelerations of the rotor and electric motor housings, and a microphone measured the noise of the operating rotor machine. Inside the bearing, a pressure sensor measured the supply pressure and a contact resistance sensor measured the fluid-film thickness. Six conditions of the test rig were studied, including the normal condition, the conditions with loosened bolts and the rotor unbalance condition. The general classification problem of recognizing six classes and the simplified classification problem of recognizing two classes (normal or abnormal) were solved. Several random samples of the two-class dataset with 800 data points each are shown in Fig. 3.

Fig. 3. Random samples of the two-class dataset with normalized measurement results from the microphone and from one of the accelerometers
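The preparation of one input sample from several sensor records can be sketched as follows. Min-max scaling is used here as one common normalization; the paper does not state which normalization was applied, and the function names are hypothetical.

```python
def min_max_normalize(signal):
    """Scale one sensor record to the interval [0, 1]."""
    lo, hi = min(signal), max(signal)
    if hi == lo:
        return [0.0 for _ in signal]         # constant signal carries no shape
    return [(v - lo) / (hi - lo) for v in signal]


def build_input_row(*sensor_records):
    """Normalize each record separately and concatenate them into one row."""
    row = []
    for record in sensor_records:
        row.extend(min_max_normalize(record))
    return row
```

For example, `build_input_row(microphone_record, accelerometer_record)` would give a single row of the input matrix, where both argument names are placeholders for measured data. Normalizing each sensor separately keeps signals with different physical units on a comparable scale.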

Then the ANN described by Eqs. (7)-(8) was trained and tested to solve the classification problems. The accuracy of the two-class and the six-class recognition was up to 80 % and 90 % respectively. It should be noted that the accuracy of the two-class classification by experts was up to 70 %, which means that the expert classification was close to random.

The third series of tests was a simulation. The rotor dynamics simulation model became an environment observed and controlled by an agent. The agent model was based on the DDPG algorithm (see Section 3). The ANN architectures are shown in Fig. 4.

Fig. 4. The DDPG network architectures: a) the actor network and b) the critic network [2]

![The DDPG networks architectures: a) the actor network and b) the critic network [2]](https://static-01.extrica.com/articles/21549/21549-img4.jpg)

a)

![The DDPG networks architectures: a) the actor network and b) the critic network [2]](https://static-01.extrica.com/articles/21549/21549-img5.jpg)

b)

The agent directly controlled the bearing clearance size (see Eq. (3)) and thereby indirectly the pressure distribution and the reaction forces in the bearing. The goal of the control system was minimization of the power loss due to friction and vibration in the bearing. The ANNs were trained and tested. The results demonstrated that the DDPG agent reduced the power loss by up to 17 %.

5. Conclusions

The proposed tools for rotor dynamics simulation, condition classification and control based on artificial neural networks enable the development of predictive modeling, fault diagnosis and energy-efficient control systems for the design of intelligent rotating machines. All the developed systems can be combined in one device. Further work concerns the design of a device that combines the functions of predictive modeling, fault diagnosis and control in interaction with a rotating machine.

References

[1] Goodfellow I., Bengio Y., Courville A. Deep Learning. MIT Press, 2016.

[2] Hori Y. Hydrodynamic Lubrication. Yokendo Ltd, Tokyo, 2006.

[3] Friswell M. I. Dynamics of Rotating Machines. Cambridge University Press, 2010.

[4] Kornaev A. V., Kornaev N. V., Kornaeva E. P., Savin L. A. Application of artificial neural networks to calculation of oil film reaction forces and dynamics of rotors on journal bearings. International Journal of Rotating Machinery, Vol. 2017, 2017, p. 9196701.

[5] Lei Y., Yang B., Jiang X., Jia F., Li N., Nandi A. K. Applications of machine learning to machine fault diagnosis: a review and roadmap. Mechanical Systems and Signal Processing, Vol. 138, 2020, p. 106587.

[6] Liu R., Yang B., Zio E., Chen X. Artificial intelligence for fault diagnosis of rotating machinery: a review. Mechanical Systems and Signal Processing, Vol. 108, 2018, p. 33-47.

[7] Busoniu L., de Bruin T., Tolic D., Kober J., Palunko I. Reinforcement learning for control: performance, stability, and deep approximators. Annual Reviews in Control, Vol. 46, 2018, p. 8-28.

[8] Lillicrap T. P., Hunt J. J., Pritzel A., Heess N., Erez T., Tassa Y., Silver D., Wierstra D. Continuous control with deep reinforcement learning. International Conference on Learning Representations, 2016.

[9] Mathworks: help center. Nnstart tool, https://www.mathworks.com.

[10] Keras API, https://keras.io/api/.

About this article

This work was supported by the Russian Science Foundation under Project No. 16-19-00186. The authors gratefully acknowledge this support. The authors would also like to thank A. Rodichev, A. Fetisov, Y. Kazakov and S. Popov for the development of the multi-sensor test rig.