Abstract

This paper presents a two-dimensional approximately harmonic projection (2DAHP) algorithm for gait recognition. 2DAHP is originated from the approximately harmonic projection (AHP), while 2DAHP offers some advantages over AHP. 1) 2DAHP can preserve the local geometrical structure and cluster structure of image data as AHP. 2) 2DAHP encodes images as matrices or second-order tensors rather than one-dimensional vectors, so 2DAHP can keep the correlation among different coordinates of image data. 3) 2DAHP avoids the singularity problem suffered by AHP. 4) 2DAHP runs faster than AHP. Extensive experiments on gait recognition show the effectiveness and efficiency of the proposed method.

1. Introduction

Recently, the average silhouettes-based human gait recognition has received extensive attention due to its potential applications in many fields [1-4], such as identity authentication and video surveillance. In general, a binary silhouette image of size 128×88 in the USF HumanID gait database is represented as a vector in the image space . Consequently, a major challenge of gait recognition is that the captured gait image often lies in a high-dimensional image space. Due to the consideration of the curse of dimensionality, a common way to resolve this problem is to use dimensionality reduction techniques. Once we obtain lower-dimensional representations of the original gait images, the traditional classification methods can be applied in the reduced feature space. Therefore, the main objective of this paper is to find techniques that can introduce lower-dimensional feature representations of gait images with enhanced discriminatory power.

The most representative algorithms for dimensionality reduction are principal component analysis (PCA) and linear discriminant analysis (LDA) [5]. Although PCA and LDA have been successfully applied to face recognition, image retrieval, and gait recognition, they are designed for discovering only the global Euclidean structure, whereas the local manifold structure is ignored. In fact, the global statistics such as variance is often difficult to compute when there are no sufficient samples. In addition, a number of research efforts have shown that the images possibly reside on a nonlinear submanifold and the representation of image is fundamentally related to the problem of manifold learning [6-9]. Given a set of high-dimensional data points, manifold learning techniques aim to discover the geometric properties of the data space. In the past years, a number of manifold learning algorithms have been developed, representative algorithms include locally linear embedding (LLE) [10], ISOMAP [11], and Laplacian eigenmaps (LE) [12]. LLE is designed to maintain the local linear reconstruction relationship among neighboring points in the lower-dimensional space. ISOMAP aims to preserve global geodesic distances of all pairs of samples. LE aims to preserve proximity relationships by manipulations on an undirected weighted graph, which indicates neighbor relations of pairwise samples. These nonlinear methods do yield impressive results on some artificial benchmarks and several real applications. However, they suffer from the out of sample problem, i.e., they can only obtain mappings that are defined on the training data points and how to explicitly calculate the mappings on novel testing data points remains unclear. Therefore, these nonlinear manifold learning algorithms might not be suitable for gait recognition. To cope with the out of sample problem, locality preserving projection (LPP) [13] applies a linearization procedure to construct explicit mappings over new samples. In the recent research, Lin et al. [14] point that utilizing the affine hulls of the manifold and the connected components is more effective for preserving the local geometrical structure and cluster structure of original data, and propose a new algorithm termed approximately harmonic projection (AHP) for dimensionality reduction. AHP is a linear manifold learning method based on the harmonic framework, and the optimal transformation can be obtained by approximating the Dirichlet integral. It is worth noting that all these methods unfold input data into vectors before dimensionality reduction. But images are naturally in the form of second-order or higher-order tensors [15-17]. For example, gray-level images represent second-order (matrix), and Gabor-filtered image represents third-order tensors. Consequently, such kind of vectorization largely increases the computational costs and seriously destroys the intrinsic tensor structure of images. To cope with these issues, multilinear extensions of PCA, LDA, and LPP, namely 2DPCA [18], 2DLDA [19], and 2DLPP [20] are proposed, respectively. These methods aim to conduct subspace analysis by directly encoding images as two-dimensional image matrices rather than one-dimensional vectors. The advantages of using image-as-matrix representation have been indeed consistently pointed out in a number of recent research efforts [15-20], especially when the number of training samples is small. Nevertheless, the multilinear (tensor) extension of AHP and its application to gait recognition are still a research area where few people have tried to explore.

This paper represents a gray-level average silhouette image of size as the matrix (or second-order tensor) in the tensor space . Then a two-dimensional approximately harmonic projection (2DAHP) is proposed by tensorizing AHP. Compared with the original AHP, 2DAHP can directly process gait images in their original matrix form and utilize correlations among pixels within different dimensions (i.e., rows and columns). Moreover, the smaller number of data entries along each data dimension facilitates subspace learning with limited training data. 2DAHP is much more computational efficient since the decomposed matrices are of size or , which is much smaller than that of size in AHP. 2DAHP can avoid the singularity problem. In addition, the trace ratio optimization technique is also applied to efficiently solve 2DAHP.

The remainder of this paper is organized as follows. Section 2 briefly reviews AHP. Section 3 introduces our proposed 2DAHP algorithm. Experimental results on gait recognition are presented in Section 4. The concluding remarks are provided in Section 5.

2. Brief review of approximately harmonic projection (AHP)

AHP is a recently proposed linear manifold learning method for dimensionality reduction [14]. It is based on the approximate affine hull and explicitly utilizes the edge length to reflect the geometrical structure of the manifold structure of the data space.

Given a set of data points , let . Let and be two weight matrices defined on the data points. The optimal projection of AHP can be obtained by solving the following minimization problem:

with the constraint:

where represents an edge vector that has an orientation from to denotes the length of the edge between and , is the arc length of . and are two matrices defined as follows: if and are connected, then and otherwise, and are two diagonal matrices defined as , . denotes the gradient on each edge, its definition is as follows:

Unlike the standard spectral graph methods which mainly consider the connectivity of graph, AHP explicitly makes use of the edge length and edge orientation which reflect the geometrical structure of the manifold. Therefore, AHP can precisely model multiple connected components of the data manifold, which is especially important for discriminating data with different submanifold (cluster) structure.

The objective function in AHP aims to use the approximate affine hull of the graph to separate data points sampled from different components. Therefore, minimizing it is to ensure that if and lie in the multiple connected components, then and are made close by the optimal projection. Finally, the projection vector that minimizes (1) is given by the minimum eigenvalue solution to the generalized eigenvalue problem:

Note that, in the appearance-based image analysis, one is often confronted with the fact the dimension of image vector is much smaller than the number of images. Thus, the matrix is singular. To avoid the singularity problem, one may first apply PCA to remove the components corresponding to zero eigenvalues. Thus, the projection vector of AHP can be considered as the eigenvectors of the matrix associated with the smallest eigenvalues. In addition, since is not usually symmetric, the AHP projection axes are not orthogonal.

Let the column vector of be the solution of (4) ordered according to their eigenvalues . Thus, the embedding is given by , where is a -dimensional vector and is an matrix.

3. Two-dimensional approximately harmonic projection (2DAHP)

Given a set of data points in the second-order tensor (or matrix) space , let be an orthonormal basis of and be an orthonormal basis of , it has been shown that forms a basis of the tensor space [20]. Thus, a second-order tensor can be uniquely defined as .

Given a set of data points in , two-dimensional approximately harmonic projection (2DAHP) aims to find two projection matrices and that maps each data point to a lower-dimensional matrix representation by such that represents .

Let and be the projection matrices, according to (1) and (2), the optimal objective function of 2DAHP with the matrix representation can be rewritten as follows:

with the constraint:

where is similarly defined as AHP.

Let and be a diagonal matrix, . Since , we have:

where and . Similarly, , so we can also obtain:

where and Consequently, we should simultaneously minimize and .

In addition, similar to the above derivation process, the left side of constraint function equation (6) can be converted to:

where , , , and is a diagonal matrix, .

Finally, the optimal objective function (5) subject to (6) can be transformed as:

Because of difficulty in solving the optimal and simultaneously, we follows the similar computational methods as [20] to compute and iteratively. We first initialize with an identity matrix, then can be approximately computed with generalized eigenvalue decomposition (GED) by transforming the optimal objective function (11) into the tractable ratio trace form . That is, can be regarded as the eigenvectors associated with the minimum eigenvalues of the following generalized eigenvector problem:

Once is obtained, similarly, we can update by solving the following generalized eigenvector problem:

Therefore, we can obtain the final optimal and by iteratively solving the generalized eigenvector problems (12) and (13).

In the preceding section, we approximately computed the the optimal objective functions of (10) and (11) by converting them into ratio trace problems, which are solved by GED. However, the obtained solutions may deviate from the original objectives, which may lead to uncertainty in subsequent classification [21]. To address these problems, we describes how to directly solve (10) and (11) with the Iterative algorithm for the Trace Ratio (ITR) optimization problem introduced in [21]. To compute , we first fix and initialize as an arbitrary columnly orthogonal matrix. In each iterative step, we solve a trace difference problem , where is the trace ratio value calculated from the projection matrix of the previous step, i.e., . Once is obtained, similarly, we can update by solving where . Finally, output the final and when the iterative algorithm converges to optimal solutions. The detailed iteration algorithm for solving (10) and (11) can be presented as follows:

Algorithm1: The iteration algorithm for directly solving the optimal problem (10) and (11) in 2DAHP.

Step 1: Initialize and as two arbitrary column-wise orthogonal matrices.

Step 2: For , do

Step 2.1: Calculate the trace ratio value according to the projection matrix :

Step 2.2: Obtain the new by solving the following eigen-decomposition problem:

Step 2.3: For the given , calculate the trace ratio value according to the projection matrix :

Step 2.4: Obtain the new by solving the following eigen-decomposition problem:

where are the smallest eigenvalues, and is the eigenvector associated with eigenvalue , which constitutes the ith column vector of the matrix .

Step 2.5: If and , then break.

Step 3: Output the projection matrices and .

From the above algorithemic procedure, it can be easily observe that the obtained projection matrices and are orthogonal. In addition, following the conclusions in [21], the above iteration algorithm will converge to an optimal value. For more details about proof of convergence, please refer to [21].

4. Experimental results

In this section, to investigate the performance of our proposed 2DAHP algorithm for gait recognition, we compare the 2DAHP algorithm with 2DPCA [18], 2DLDA [19], 2DLPP [20], and the original AHP [14] algorithms for gait recognition on the well-known USF HumanID gait database, where 2DPCA, 2DLDA, and 2DLPP are three popular tensor methods in face recognition and gait recognition, and the original AHP algorithm is a vector-based algorithm. The settings of these compared algorithms are identical to the description in the corresponding papers. In addition, to cope with the singular problem existed in the original AHP, we apply PCA to remove the components corresponding to zero eigenvalues before carrying out AHP. For 2DAHP, we empirically set the optimal iteration number as 5 for each probe set, since the latter experimental results show that the 2DAHP algorithm converges quite quickly.

The USF HumanID gait database is constructed by Sarkar et al. [1], it contains 1870 sequences from 122 individuals walking on an elliptical path in front of two cameras. This database provided one gallery set containing the sequences from 122 individuals and 12 probe sets containing different numbers of individuals varying from 33 to 122 for algorithm training and testing, respectively. More details information about the USF HumanID gait database can be found in [1]. In this gait database, we consider sequences of binary silhouette images. As in [22] and [23], we construct the average silhouette-based gait image representation: First, a complete sequence is partitioned into several subsequences according to the gait period length provided by Sarkar et al. [1]. Then, the binary silhouette images within each gait cycle of a sequence are averaged to acquire several gray-level average silhouette images according to:

where represents the binary images for one sequence with frames, denotes the largest integer less than or equal to . Since numerous researches have experimentally shown that the average silhouette image is more effective and efficient than the original binary silhouette image for human gait recognition, we also utilize the average silhouette image for gait recognition. Fig. 1 shows some original binary images and the average silhouette images of two different individuals, where the first seven images and the last image in each row denote the binary silhouette images and the average silhouette images, respectively. As can be seen, different individuals have different average silhouette images.

To perform gait recognition, we first obtain the average silhouette image subspaces by dimensionality reduction algorithms. Then, all the averaged images from both the gallery set and probe sets are projected into the image subspaces. Finally, the nearest-neighbor classifier is adopted to identify new average silhouette images, where the distance measure uses the median operator for its robust to noise [1, 23]:

where , and , are the lower representations from one probe sequence and one gallery sequence, respectively, and denote the total number of average silhouette images. For each dimensionality reduction algorithm, we only show its performance in the or dimensional subspace. For each case, we average the results over 20 random splits of training and testing sets.

Fig. 1Some original binary images and the average silhouette images of two different individuals in the USF HumanID gait database

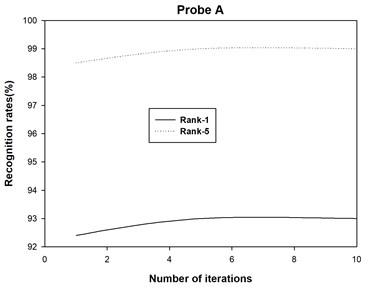

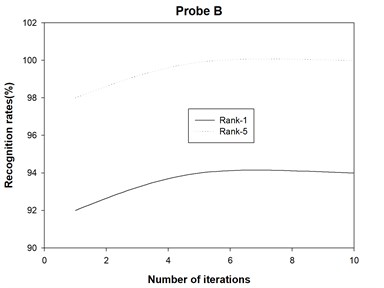

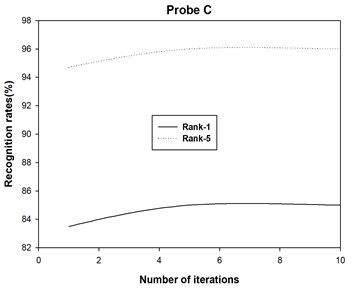

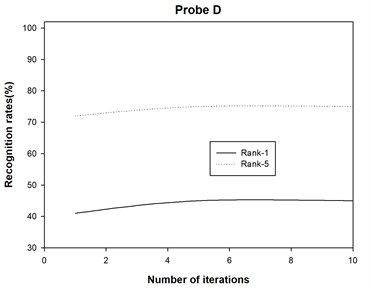

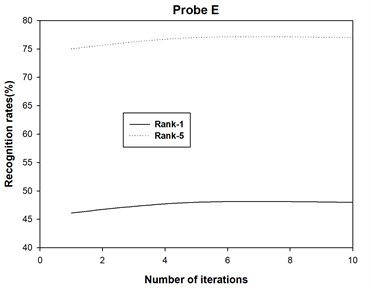

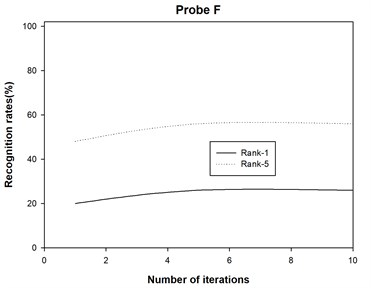

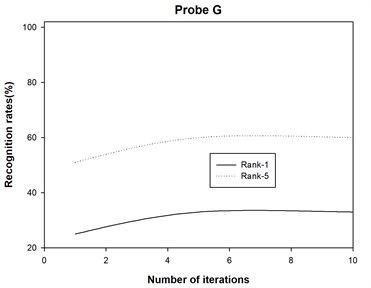

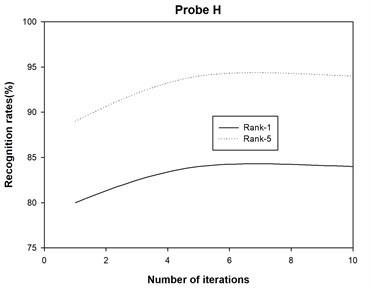

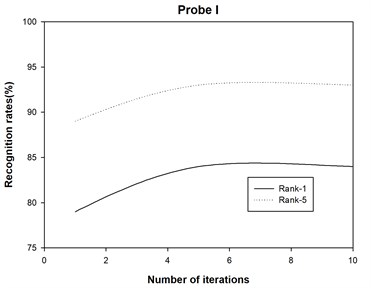

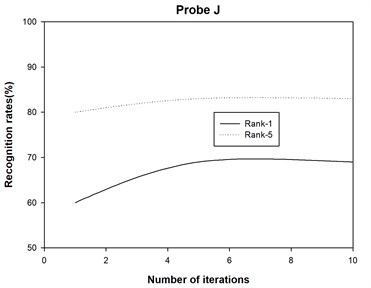

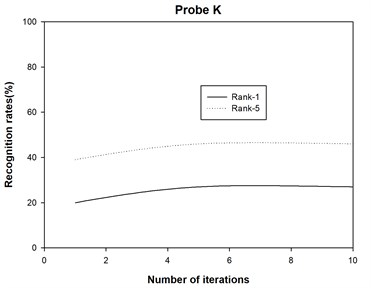

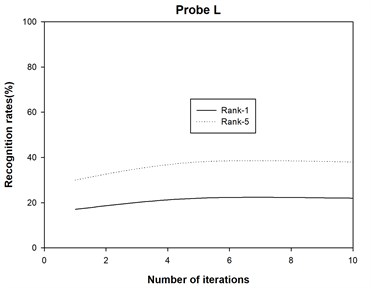

The recognition accuracies are shown in Table 1 and Table 2, where Rank-1 indicates that the correct subject is ranked as the top candidate, Rank-5 means that the correct subject is ranked among the top five candidates, and Average denotes the recognition rate among all the probe sets. Moreover, we also plot the recognition rate variance with different numbers of iterations for probe sets A, B, C, D, E, F, G, H, I, J, K and L in Fig. 2. Finally, we report the running times of 2DAHP and AHP in Table 3.

From the experimental results listed in Table 1-3 and Fig. 2, we can have the following observations:

1) Our proposed 2DAHP consistently outperforms the 2DPCA, 2DLDA, 2DLPP, and AHP algorithms, which demonstrates that it is beneficial to use simultaneously two-dimensional matrix representation as well as the local geometrical structure and cluster structure for gait recognition.

2) 2DPCA performs the worst among the compared algorithms. A possible explanation is as follows: similar to the traditional PCA, the 2DPCA is simply achieves object reconstruction and it is not necessarily useful for discriminating gait images with different subjects which is the ultimate goal of gait recognition.

3) The 2DLDA performs comparatively to 2DLPP. This demonstrates that it is hard to evaluate whether local manifold structure or class label information is more important, which is consistent with existing studies.

4) The 2DAHP algorithm converges quite quickly for probe sets A, B, C, D, E, F, G, H, I, J, K and L, and recognition rates changes slightly with different iteration numbers. After about 5 iterations, 2DAHP can converge to the optimal solution in all 12 probe sets.

5) 2DAHP achieves significant speed up comparing to AHP. Theses results are consistent with the theoretical analysis of the efficiency, i.e., 2DAHP can utilize the intrinsic tensor structure of gait images to improve running efficiency.

Table 1Performance comparison in terms of Rank-1 recognition results (%)

Probe | A | B | C | D | E | F | G | H | I | J | K | L | Average |

2DPCA | 86 | 87 | 75 | 26 | 27 | 18 | 19 | 56 | 61 | 53 | 10 | 11 | 44.1 |

2DLDA | 89 | 91 | 82 | 33 | 33 | 23 | 25 | 67 | 78 | 67 | 19 | 19 | 52.2 |

2DLPP | 90 | 92 | 81 | 34 | 36 | 22 | 24 | 69 | 82 | 65 | 18 | 20 | 52.8 |

AHP | 88 | 90 | 78 | 32 | 40 | 21 | 20 | 64 | 79 | 61 | 13 | 15 | 50.1 |

2DAHP | 93 | 94 | 85 | 45 | 48 | 26 | 33 | 84 | 84 | 69 | 27 | 22 | 59.2 |

Fig. 2Some original binary images and the average silhouette images of two different individuals in the USF HumanID gait database

a)

b)

c)

d)

e)

f)

g)

h)

i)

j)

k)

l)

Table 2Performance comparison in terms of Rank-5 recognition results (%)

Probe | A | B | C | D | E | F | G | H | I | J | K | L | Average |

2DPCA | 89 | 94 | 88 | 51 | 53 | 44 | 42 | 80 | 79 | 64 | 22 | 17 | 60.3 |

2DLDA | 97 | 99 | 95 | 58 | 57 | 50 | 50 | 86 | 93 | 77 | 43 | 40 | 70.5 |

2DLPP | 95 | 98 | 93 | 59 | 60 | 48 | 51 | 88 | 91 | 75 | 45 | 41 | 70.3 |

AHP | 91 | 95 | 89 | 60 | 59 | 49 | 48 | 85 | 82 | 69 | 29 | 26 | 65.2 |

2DAHP | 99 | 100 | 96 | 75 | 77 | 56 | 60 | 94 | 94 | 83 | 46 | 38 | 76.4 |

Table 3Running time(s) comparison on the USF HumanID gait database

Probe | A | B | C | D | E | F | G | H | I | J | K | L |

AHP | 2.51 | 1.17 | 1.18 | 2.64 | 1.29 | 2.48 | 1.32 | 2.41 | 1.31 | 2.40 | 1.06 | 1.08 |

2DAHP | 0.32 | 0.15 | 0.15 | 0.34 | 0.21 | 0.30 | 0.19 | 0.30 | 0.18 | 0.28 | 0.10 | 0.11 |

5. Conclusions

This paper introduces a tensor dimensionality reduction algorithm called two-dimensional approximately harmonic projection (2DAHP). Compared with the original AHP, 2DAHP can directly conducts subspace analysis by encoding an image as a two-dimensional matrix and has higher computational efficiency. Experimental results on gait recognition have demonstrated the effectiveness and efficiency of our proposed approach.

References

-

Sarkar S., Phillips P. J., Liu Z., Vega I. R., Grother P., Bowyer K. W. The HumanID gait challenge problem: data sets, performance, and analysis. IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 27, Issue 2, 2005, p. 162-177.

-

Han J., Bhanu B. Individual recognition using gait energy image. IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 28, Issue 2, 2006, p. 316-322.

-

Wang L., Tan T., Ning H., Hu W. Silhouette analysis-based gait recognition for human identification. IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 25, Issue 12, 2003, p. 1505-1518.

-

Xu D., Yan S., Tao D., Lin S., Zhang H.-J. Marginal fisher analysis and its variants for human gait recognition and content based image retrieval. IEEE Transactions on Image Processing, Vol. 16, Issue 11, 2007, p. 2811-2821.

-

Duda R. O., Hart P. E., Stork D. G. Pattern Classification. Second Edition, Wiley-Interscience, Hoboken, 2000.

-

He X., Yan S., Hu Y., Niyogi P., Zhang H.-J. Face recognition using laplacianfaces. IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 27, Issue 3, 2005, p. 328-340.

-

Cai D., He X., Han J., Zhang H.-J. Orthogonal laplacianfaces for face recognition. IEEE Transactions on Image Processing, Vol. 15, Issue 11, 2006, p. 3608-3614.

-

Yan S., Xu D., Zhang B., Zhang H.-J., Yang Q., Lin S. Graph embedding and extensions: a general framework for dimensionality reduction. IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 29, Issue 1, 2007, p. 40-51.

-

Mu Y., Tao D. Biologically inspired feature manifold for gait recognition. Neurocomputing, Vol. 73, Issue 4-6, 2010, p. 895-902.

-

Roweis S. T., Saul L. K. Nonlinear dimensionality reduction by locally linear embedding. Science, Vol. 290, Issue 5500, 2000, p. 2323-2326.

-

Tenenbaum J. B., Silva V., Langford J. C. A global geometric framework for nonlinear dimensionality reduction. Science, Vol. 290, Issue 5500, 2000, p. 2319-2323.

-

Belkin M., Niyogi P. Laplacian eigenmaps for dimensionality reduction and data representation. Neural Computation, Vol. 15, Issue 6, 2003, p. 1373-1396.

-

He X., Niyogi P. Locality preserving projections. Advances in Neural Information Processing Systems, 2003, p. 585-591.

-

Lin B., He X., Zhou Y., Liu L., Lu K. Approximately harmonic projection: theoretical analysis and an algorithm. Pattern Recognition, Vol. 43, Issue 10, 2010, p. 3307-3313.

-

Tao D., Li X., Wu X., Maybank S. General tensor discriminant analysis and Gabor features for gait recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 29, Issue 10, 2007, p. 1700-1715.

-

Xu D., Yan S., Tao D., Zhang L., Li X., Zhang H.-J. Human gait recognition with matrix representation. IEEE Transactions on Circuits and Systems for Video Technology, Vol. 16, Issue 7, 2006, p. 896-903.

-

Yan S., Xu D., Yang Q., Zhang L., Tang X., Zhang H. Multilinear discriminant analysis for face recognition. IEEE Transactions on Image Processing, Vol. 16, Issue 1, 2007, p. 212-220.

-

Yang J., Zhang D., Frangi A. F., Yang J. Y. Two-dimensional PCA: a new approach to appearance-based face representation and recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 26, Issue 1, 2004, p. 131-137.

-

Ye J., Janardan R., Li Q. Two-dimensional linear discriminant analysis. Neural Information Processing Systems, 2005, p. 1569-1576.

-

He X., Cai D., Niyogi P. Tensor subspace analysis. Advances in Neural Information Processing Systems, 2005, p. 499-506.

-

Wang H., Yan S., Xu D., Tang X., Huang T. Trace ratio vs. ratio trace for dimensionality reduction. Proceedings of the 2007 IEEE Computer Society Conference on Computer Vision and Pattern Recognition, 2007, p. 1-8.

-

Han J., Bhanu B. Statistical feature fusion for gait-based human recognition. Proceedings of the 2004 IEEE Computer Society Conference on Computer Vision and Pattern Recognition, 2004, p. 842-847.

-

Liu Z., Sarkar S. Simplest representation yet for gait recognition: averaged silhouette. Proceedings of the 17th International Conference on Pattern Recognition, 2004, p. 211-214.

About this article

This work is supported by NSFC (Grant No. 70701013), the National Science Foundation for Post-Doctoral Scientists of China (Grant No. 2011M500035), and the Specialized Research Fund for the Doctoral Program of Higher Education of China (Grant No.20110023110002).